Benefits of running foundation models at the source of data collection

-

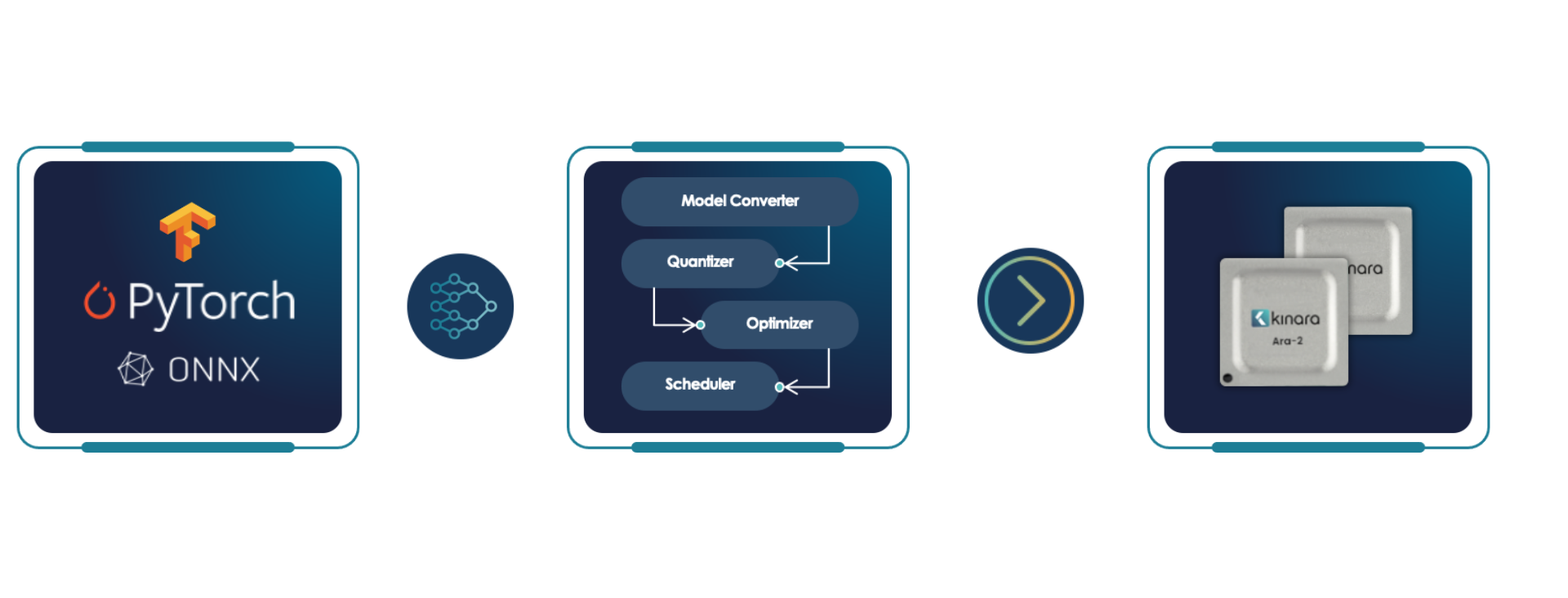

Creates optimal execution plan

AI compiler automatically determines the most efficient data and compute flow for any AI graph

- Extensible compiler: converts and schedules models ranging from CNNs to complex vision transformers and generative AI.

- Readily supports new operators: fully programmable compute engines with a neural-optimized instruction set.

- Support for multiple datatypes: INT8 on Ara-1 and Ara-2; INT4 and MSFP16 on Ara-2 only.

- Efficient dataflow for any network architecture type: software-defined tensor partitioning and routing optimized for dataflow.

- Utilizes flexible quantization methods: choose between the Kinara integrated quantizer and TensorFlow Lite or PyTorch pre-quantized networks (Ara-2 only).
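To make the INT8 datatype and quantization items above more concrete, here is a minimal sketch of generic symmetric per-tensor INT8 quantization. It is not the Kinara quantizer, whose method is not described here; the function names `quantize_int8` and `dequantize_int8` and the sample weights are hypothetical and chosen only for illustration.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to the INT8 range [-127, 127].

    Hypothetical helper for illustration; not part of any Kinara SDK.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from INT8 codes and their scale."""
    return [q * scale for q in quantized]


# Assumed sample weights, for demonstration only.
weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
```

Each restored value differs from the original by at most half a quantization step (`scale / 2`), which is the precision traded for the smaller 8-bit representation. A pre-quantized TensorFlow Lite or PyTorch network would already carry its own scales, so a step like this would be skipped in that path.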